More prototype progress

For quite a while, we were stuck on our launcher prototype. It wasn't shooting consistently: notes occasionally flipped in the air and followed irregular trajectories. Eventually, however, we identified a few issues and fixed them. First, we added another set of rollers before the flywheel. These rollers keep the note level relative to the flywheel, which makes the note's angle more consistent as it enters the flywheel. Next, we properly tuned the flywheel's PID. Because the flywheel's speed became more predictable, our testing became more consistent. Finally, we reduced the horizontal compression of the note. The launcher now compresses the note by only 1/16th of an inch on each side. With less compression, the note keeps a more circular shape as it travels through the launcher.
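For readers newer to flywheel control, here is a minimal sketch of a velocity PID loop paired with a velocity feedforward term. This is illustrative only, not our robot code: the class name `FlywheelPID`, the gains, and the first-order flywheel model (`rpm_per_volt`, `tau_s`) are all hypothetical placeholders. The feedforward (`kv * setpoint`) supplies most of the voltage, so the PID terms only need to correct the remaining error.

```python
class FlywheelPID:
    """Velocity PID plus a velocity feedforward term (kv); output is in volts.

    All gains are hypothetical placeholders, not tuned values.
    """

    def __init__(self, kp: float, ki: float, kd: float, kv: float, dt: float) -> None:
        self.kp, self.ki, self.kd, self.kv = kp, ki, kd, kv
        self.dt = dt  # control loop period in seconds
        self.integral = 0.0
        self.prev_error = None

    def calculate(self, measured_rpm: float, setpoint_rpm: float) -> float:
        error = setpoint_rpm - measured_rpm
        self.integral += error * self.dt
        if self.prev_error is None:
            derivative = 0.0  # no derivative on the first loop iteration
        else:
            derivative = (error - self.prev_error) / self.dt
        self.prev_error = error
        # Feedforward supplies the bulk of the voltage; PID corrects what is left.
        volts = (self.kv * setpoint_rpm + self.kp * error
                 + self.ki * self.integral + self.kd * derivative)
        return max(-12.0, min(12.0, volts))  # clamp to nominal battery voltage


def simulate(controller: FlywheelPID, setpoint_rpm: float, steps: int,
             rpm_per_volt: float = 500.0, tau_s: float = 0.1) -> float:
    """Run the controller against a hypothetical first-order flywheel model."""
    rpm = 0.0
    for _ in range(steps):
        volts = controller.calculate(rpm, setpoint_rpm)
        # Speed relaxes toward rpm_per_volt * volts with time constant tau_s.
        rpm += (rpm_per_volt * volts - rpm) / tau_s * controller.dt
    return rpm


ctrl = FlywheelPID(kp=0.001, ki=0.0, kd=0.0, kv=1 / 500.0, dt=0.02)
print(round(simulate(ctrl, 4000.0, steps=100)))  # settles at the 4000 RPM setpoint
```

When `kv` matches the real motor closely, a small proportional gain is often enough on its own, which is why the sketch runs with `ki` and `kd` at zero. On a real robot, the motor controller's onboard velocity loop would normally do this work instead.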

Our launcher is now much more consistent

We also tested our launcher's amp scoring capabilities. Inspired by Team 4481, Team Rembrandts, we tried scoring into the amp by reversing some of the rollers. This method worked pretty well with our design.

In these tests, we found that our flywheels pushed the note up toward the top of the amp and kept it from dropping down into it. However, once we moved away, the note fell right in.

CAD Progress

After examining the climb geometry more closely, we realized that our selected climb design would have difficulty lifting our robot high enough for our launcher to score into the trap. We've also been paying close attention to threads here on Chief Delphi about shooting into the trap from the ground. Given these developments, we decided to change our climb plan. Rather than use a separate climb subsystem, we're attaching two hook systems to our launcher: two pivots off the launcher, each with a hook attached some distance from the pivot.

We expect this design to reach at least 3 feet from the ground, allowing us to climb along most of the chain. We also expect it to let at least one other robot climb with us on the same chain, although fitting all three robots on one chain seems unlikely. We plan to shoot into the trap throughout the match. Once our stage is built, we'll do more launcher testing with it, and we hope to achieve decent accuracy.

Code Progress

Our programming team is making good progress. Right now, our programmers are split into groups by subsystem. Here are details on some of the more interesting developments.

Drivebase

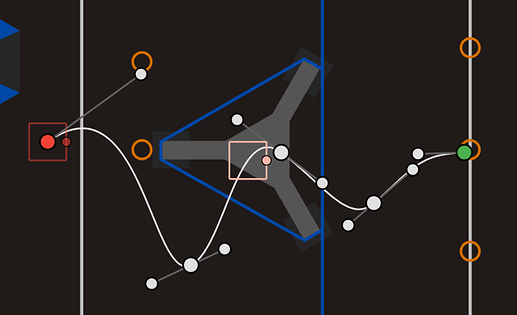

We've continued experimenting with Choreo and PathPlanner for autonomous. We created a few diagnostic paths to tune our odometry and PID on. Each path consists of a marked starting location (we use a tape square on our field), a difficult trajectory (mostly spinning or erratic translation), and a return to the start location. Using these paths, we can identify which kinds of movement throw off our odometry the most. Then, we plan to find ways to reduce that odometry error.

This path featured lots of rotation, which threw off our odometry significantly.
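One way to turn these return-to-start runs into numbers is sketched below. It assumes the team physically measures where the robot actually stopped relative to the tape square and compares that with the pose odometry reports at the end of the path. The `Pose2d` dataclass here is a hypothetical stand-in, not WPILib's class, and `odometry_drift` and `rank_paths` are illustrative helpers, not part of Choreo or PathPlanner.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Pose2d:
    """Hypothetical field pose: meters and radians."""
    x_m: float
    y_m: float
    heading_rad: float


def odometry_drift(reported: Pose2d, measured: Pose2d) -> Tuple[float, float]:
    """Return (translation error in meters, heading error in degrees).

    `reported` is where odometry believes the robot ended; `measured` is
    where the robot actually ended, taped out relative to the start square.
    """
    translation = math.hypot(reported.x_m - measured.x_m, reported.y_m - measured.y_m)
    # Wrap the heading difference into [-pi, pi) so 1 deg vs 359 deg reads as 2 deg.
    dtheta = (reported.heading_rad - measured.heading_rad + math.pi) % (2 * math.pi) - math.pi
    return translation, math.degrees(abs(dtheta))


def rank_paths(results: Dict[str, Tuple[Pose2d, Pose2d]]) -> List[str]:
    """Order diagnostic path names from worst to best translation drift."""
    return sorted(results, key=lambda name: odometry_drift(*results[name])[0], reverse=True)
```

Ranking paths by drift makes it easy to see whether rotation-heavy paths consistently do worse than translation-heavy ones.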

Vision

This year, we're not only continuing our AprilTag vision work but also branching into object detection with Limelight's neural network interface. We plan to put AprilTag cameras on the front of the robot (the side the notes are launched from) and our Limelight for object detection on the back.

Since we'll generally be facing our targets when lining up to launch, we decided to place AprilTag cameras only on the front of the robot. This might come back to bite us if the front cameras don't provide enough data for accurate odometry correction, but we'll see.

We also decided to use object detection essentially only for autonomous pathing. We plan to use it both to improve our pathing toward game pieces and to confirm that game pieces are actually there before we path toward them. Since this game has a set of game pieces that either alliance can reach, we want to make sure they're still there before we spend time driving to them. If a game piece has already been taken, we'll switch to a different autonomous trajectory. Since we expect to face the speaker throughout auto, the center line game pieces will always be behind our robot, so we decided to put object detection cameras only on the back.
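The "check before you commit" logic could look something like the sketch below. It is a hypothetical illustration, not our code and not Limelight's API: the `Detection` record, the `"note"` label, the confidence and angle thresholds, and the note names are all assumptions. It also assumes detections have already been grouped by which center line position the camera was looking at.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Sequence


@dataclass
class Detection:
    """Hypothetical detection record; not Limelight's actual data format."""
    label: str
    confidence: float  # 0.0 to 1.0
    tx_deg: float      # horizontal angle from camera center, degrees


def note_present(detections: Sequence[Detection],
                 min_confidence: float = 0.6, max_tx_deg: float = 20.0) -> bool:
    """True if any confident 'note' detection is roughly where we expect it."""
    return any(
        d.label == "note" and d.confidence >= min_confidence and abs(d.tx_deg) <= max_tx_deg
        for d in detections
    )


def choose_next_note(candidates: Sequence[str],
                     detections_by_note: Dict[str, List[Detection]]) -> Optional[str]:
    """Return the first candidate center line note still on the field, else None.

    Candidates are in priority order; a note with no confident detection is
    assumed taken, so we fall through to the next trajectory.
    """
    for note in candidates:
        if note_present(detections_by_note.get(note, [])):
            return note
    return None
```

Returning `None` when every candidate is gone leaves room for a final fallback, such as staying near the speaker for the rest of auto.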

Climb

Before we decided to nix the separate climb subsystem, we had a climb programming group. Now that climb is integrated with the launcher, we've moved those programmers into our intake group, where they're helping integrate sensors into the intake design.

Okay, that’s about all the updates I have to share. More to come soon!